Once a respondent source is live, the field report is the main tool for understanding what is actually happening to the people who enter your survey. It breaks the source down by status, by the last question each respondent saw, by device, and by quota — so you can tell the difference between a source that is simply slow, one that is screening out too aggressively, and one where a specific question is driving people away.

Opening the field report

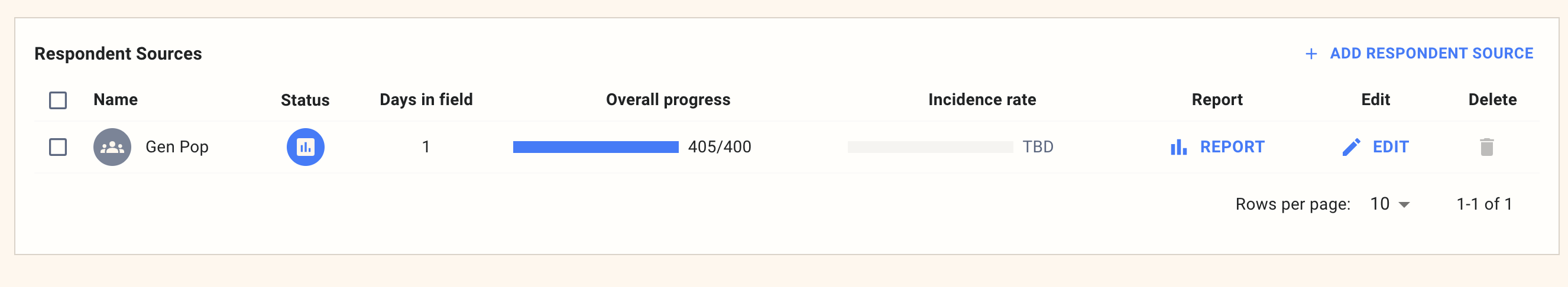

The field report is reached from the Respondent Sources list on the Summary tab. Each source has a Report button in the Report column — click it to open the field report scoped to that source.

The report opens with three tabs — Status, Terminations, and Quota Terms — and an optional report window filter in the top right, so you can restrict the view to a specific date range if you want to compare a recent window against earlier performance.

Status

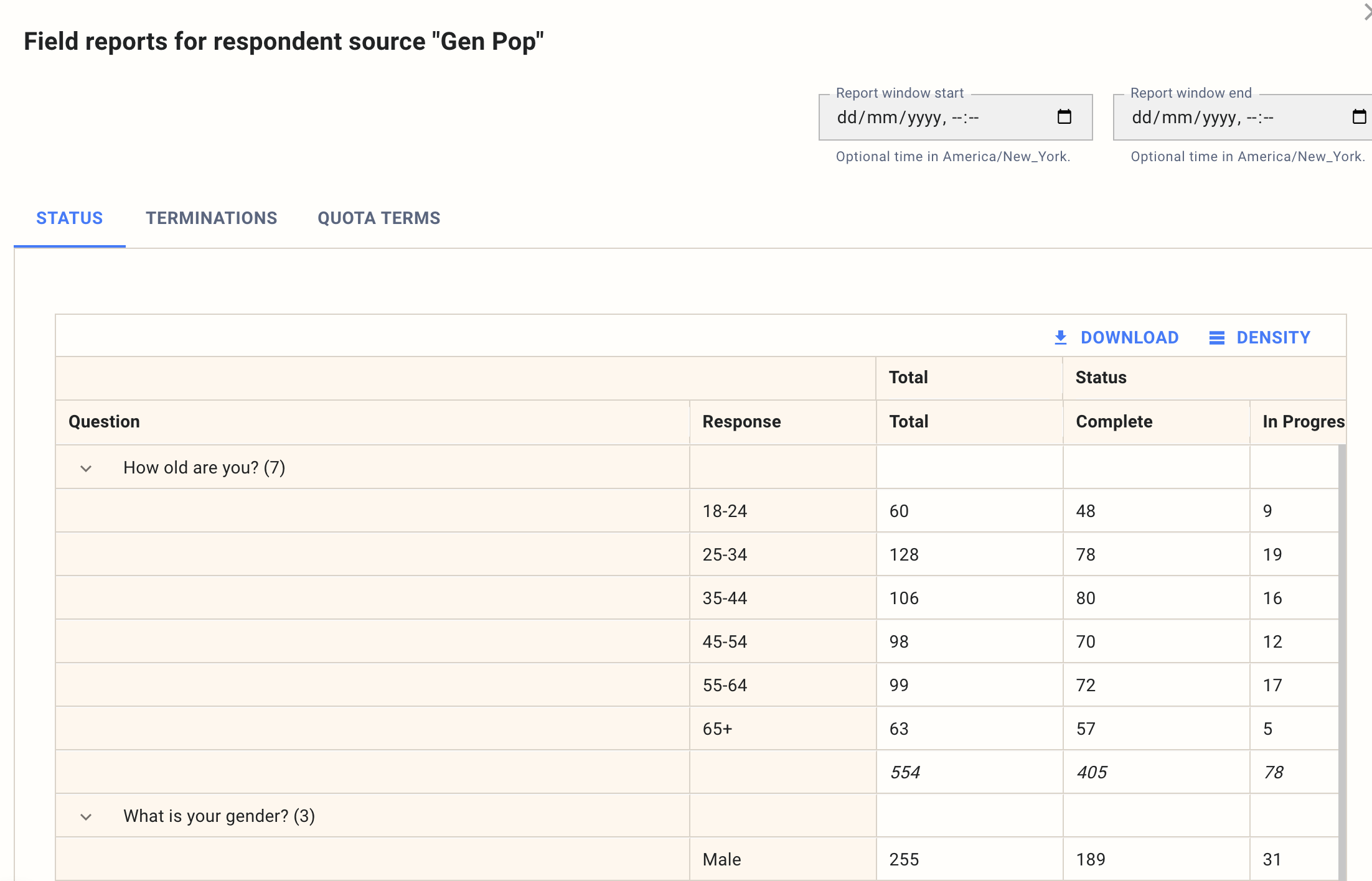

The Status tab is the headline. It groups respondents by the answers they gave to each demographic question in the survey and shows a count for every status bucket alongside each response.

The status buckets are:

- Total — everyone the source has sent into the survey, including those still in progress. This is the denominator for every other number on the page.

- Complete — respondents who finished the survey and passed quality checks. These are the responses that count toward your target.

- In Progress — respondents who are currently in the survey. Once field is closed, treat this bucket as dropouts: anyone still marked in progress never finished.

- New — respondents who arrived from the source but have not answered a single question yet. A large New bucket on a mature source usually means people are bouncing off the landing page.

- Poor Quality — respondents removed by fraud detection or deduplication. A spike here is a flag to investigate the source before continuing to spend.

- Quota Screenout — respondents who answered honestly but did not qualify for any remaining open quota. This is a normal and expected outcome; it tells you the quota is doing its job.

- Terminated — respondents sent to a specific termination point by your survey logic, typically because they failed a screener.

Reading these side by side is usually enough to classify the issue. High Terminated means the screener is tight; high Poor Quality means the source has a fraud or duplicate-respondent problem; high In Progress (once field is done) or high New means the survey itself is losing people before they engage.
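Because Total is the denominator for every other bucket, it can help to convert the counts into shares before comparing sources of different sizes. The sketch below assumes you have copied the counts from the Status tab by hand; the numbers and the `status_shares` helper are made up for illustration and are not part of the product.

```python
# Hypothetical status counts typed in from the Status tab (illustrative only).
counts = {
    "Total": 400,
    "Complete": 180,
    "In Progress": 40,
    "New": 20,
    "Poor Quality": 30,
    "Quota Screenout": 50,
    "Terminated": 80,
}


def status_shares(counts):
    """Return each status bucket as a fraction of Total."""
    total = counts["Total"]
    if total == 0:
        return {}
    return {name: n / total for name, n in counts.items() if name != "Total"}


shares = status_shares(counts)

# Print the buckets from largest share to smallest.
for name, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<16} {share:6.1%}")
```

Sorting by share puts the dominant outcome at the top, which is usually the bucket to investigate first.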

Terminations

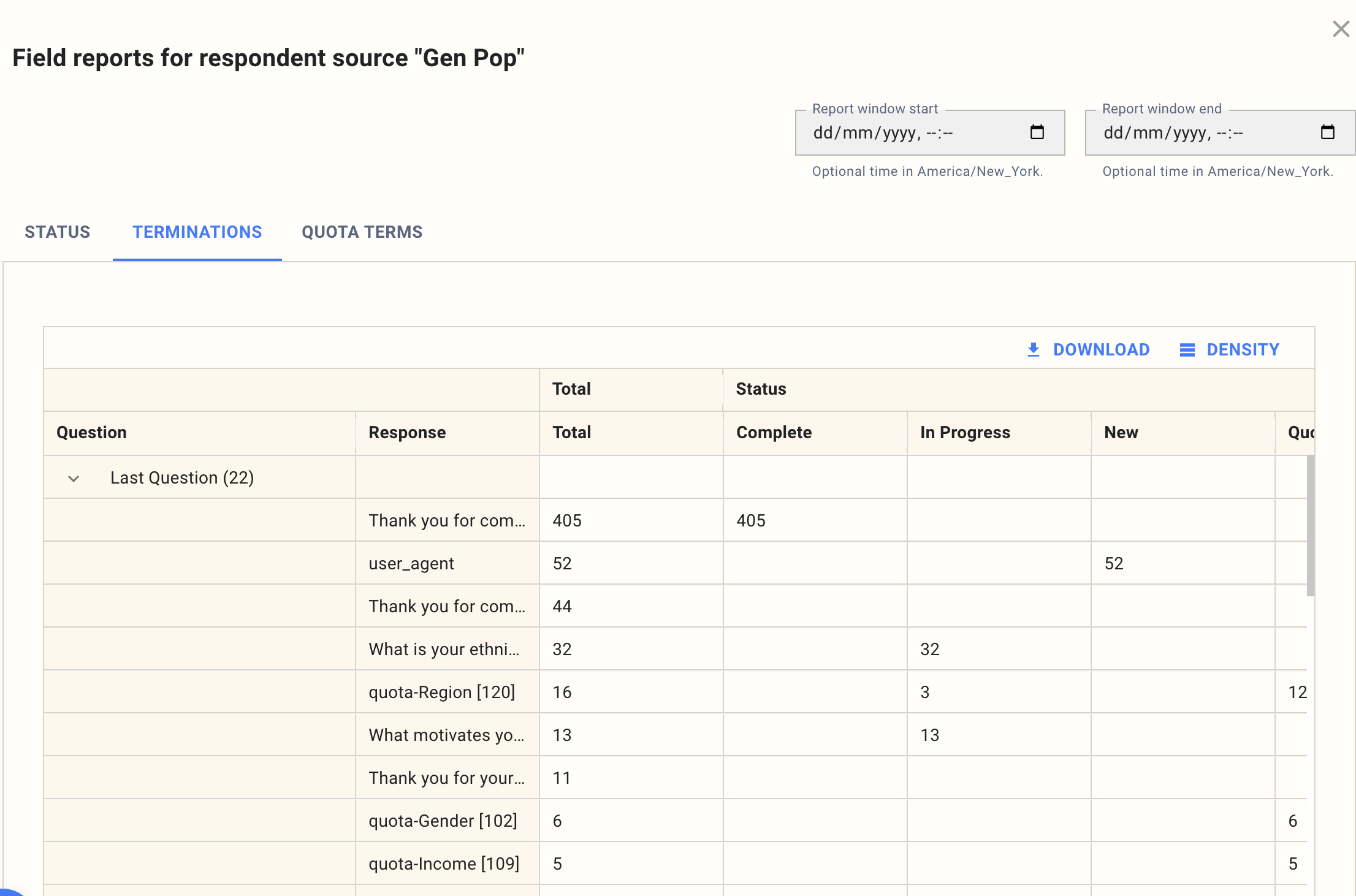

The Terminations tab is the most important part of the field report and the one to reach for first whenever a source is underperforming. It takes the people who did not complete and groups them by where they left the survey, so you can see exactly which question is driving the loss. The tab has two expandable sections — Last Question and Device — and you can open either independently.

By last question. The Last Question section shows the last question each respondent saw, sorted by how many people stopped there. Two patterns matter.

If a cluster of respondents is terminating at a specific screener or quota question (quota-Region or quota-Gender in the screenshot above, for instance), the screener is working, but you can judge whether it is tighter than you expected and decide whether to relax it.

If a cluster is stuck as In Progress on a specific non-screener question, that question is almost certainly the problem: too long, confusing, or asking for something people will not give. Respondents who show as In Progress on this view should generally be read as dropouts rather than people still actively answering, because the column surfaces the last question they engaged with before leaving.

The user_agent and Thank you for com... rows are worth calling out: the former captures respondents removed at the device-fingerprint check and the latter captures successful completes, so seeing a large number against either is expected rather than a problem.
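If you want to reproduce the Last Question grouping outside the report, the logic is a simple count per question, filtered by status. The sketch below is a minimal illustration under assumptions: the row format, the question labels `Q2` and `Q7`, and the `stops_by_question` helper are all hypothetical, and no particular export format is implied.

```python
from collections import Counter

# Hypothetical rows: (status, last question seen). Not a real export format.
rows = [
    ("Terminated", "quota-Region"),
    ("Terminated", "quota-Region"),
    ("Terminated", "quota-Gender"),
    ("In Progress", "Q7"),
    ("In Progress", "Q7"),
    ("In Progress", "Q7"),
    ("In Progress", "Q2"),
]


def stops_by_question(rows, status):
    """Count respondents with the given status per last question, largest first."""
    counter = Counter(question for s, question in rows if s == status)
    return counter.most_common()


# Terminations clustered on quota questions: the screener is doing its job.
print(stops_by_question(rows, "Terminated"))

# In Progress clustered on one non-screener question: likely a problem question.
print(stops_by_question(rows, "In Progress"))
```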

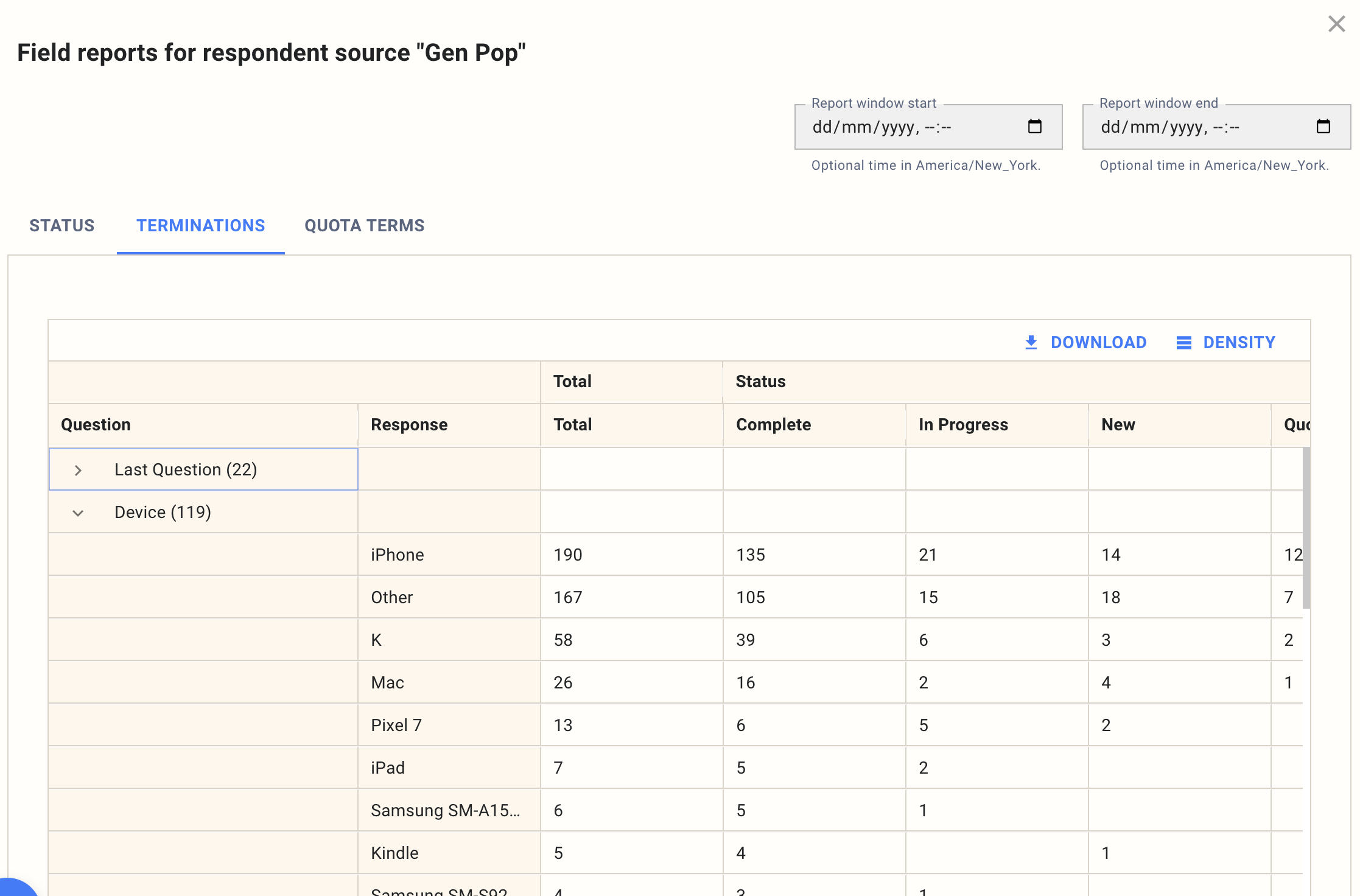

By device. Expanding the Device section shows the same status counts but grouped by the device each respondent was using — iPhone, iPad, Mac, specific Android handsets, Kindle, and so on.

If one device type is over-represented in dropouts or terminations, the survey has a rendering or interaction problem on that device. Media questions, grids, and long open ends are the usual culprits on mobile. A device-specific spike is almost always a fixable survey issue rather than a sample issue.

Between the two sections, you can usually identify whether the problem is a specific question, a specific device, or a combination of the two.
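One way to make "over-represented" concrete is to compare each device's share of dropouts with its share of all entries. The sketch below assumes hand-copied counts; the figures and the `dropout_lift` helper are illustrative, and the report itself does not necessarily compute this ratio.

```python
# Hypothetical per-device counts: (total entries, dropouts). Illustrative only.
by_device = {
    "iPhone": (200, 30),
    "Android": (150, 60),
    "Mac": (100, 10),
    "iPad": (50, 0),
}


def dropout_lift(by_device):
    """Ratio of each device's share of dropouts to its share of entries.

    A value well above 1.0 means the device accounts for more dropouts
    than its traffic alone would explain.
    """
    total_entries = sum(entries for entries, _ in by_device.values())
    total_dropouts = sum(dropouts for _, dropouts in by_device.values())
    if total_entries == 0 or total_dropouts == 0:
        return {}
    return {
        device: (dropouts / total_dropouts) / (entries / total_entries)
        for device, (entries, dropouts) in by_device.items()
        if entries > 0
    }


lift = dropout_lift(by_device)
for device, ratio in sorted(lift.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{device:<8} {ratio:.2f}")
```

In this made-up data, Android supplies 30% of entries but 60% of dropouts, so its ratio is 2.0 and it is the device to test first.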

Quota Terms

The Quota Terms tab applies the same status breakdown, but grouped by quota line instead of by demographic answer. It is the least used of the three tabs — most of the time the Status and Terminations tabs answer the question you have — but it is useful when you are running a quota-heavy study and want to see whether a particular quota is closing faster than expected or screening out a disproportionate share of its incoming traffic.

If a quota is filling much faster than the others, check the source definition and whether that quota is drawing from a different pool. If a quota is both slow and has a high screenout rate, the screener criteria may not match what the sample provider can deliver for that audience.

The report window filter

The Report window start and Report window end date pickers at the top of every tab limit the counts to respondents who entered the source within that window (in America/New_York time). Leave them blank to see the full lifetime of the source. The window is most useful when you have made a change mid-field — tightened a screener, paused and restarted a source, fixed a broken question — and want to see only what has happened since.
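Because the window is evaluated in America/New_York time, an entry logged late in the evening New York time can carry the next day's date in UTC. The sketch below shows that boundary effect with Python's standard `zoneinfo` module. The `in_window` helper is hypothetical, and treating both ends of the window as inclusive is an assumption, not documented behavior.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

NY = ZoneInfo("America/New_York")


def in_window(entered_utc, start_local=None, end_local=None):
    """True if a UTC entry time falls inside an optional New York-time window.

    start_local and end_local are naive datetimes interpreted as New York
    wall-clock time; None means that side of the window is open.
    Inclusive bounds are an assumption made for this sketch.
    """
    local = entered_utc.astimezone(NY)
    if start_local is not None and local < start_local.replace(tzinfo=NY):
        return False
    if end_local is not None and local > end_local.replace(tzinfo=NY):
        return False
    return True


# 03:30 UTC on 2 March 2024 is still 22:30 on 1 March in New York.
entry = datetime(2024, 3, 2, 3, 30, tzinfo=timezone.utc)
start = datetime(2024, 3, 2, 0, 0)  # window opens at midnight, New York time

print(in_window(entry, start_local=start))  # False: entry predates the window
print(in_window(entry))                     # True: blank window means lifetime
```

If you reconcile the report against timestamps stored in UTC, converting to New York time first avoids off-by-one-day mismatches at the window edges.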

Acting on what you see

A few rules of thumb for using the report while a source is live:

- Give any new source at least 50 to 100 entries before acting on the numbers. Termination rates swing widely on small samples and only settle as more respondents come in.

- If terminations are concentrated on a screener or quota question, that is working as intended — decide whether the screener is calibrated correctly rather than treating it as a problem.

- If dropouts are concentrated on a specific non-screener question, fix the question before spending more on the source.

- If a device type is over-represented in dropouts, test the offending question on that device and adjust before continuing.

- If Poor Quality is elevated, pause the source and investigate before continuing to spend.
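To see why the first rule of thumb asks for a minimum number of entries, it helps to look at how uncertain an observed rate is at low volumes. The sketch below uses the Wilson score interval, a standard statistical method chosen here for illustration; it is not something the field report calculates, and the counts are made up.

```python
import math


def wilson_interval(events, n, z=1.96):
    """Approximate 95% Wilson score interval for an observed rate events / n."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = events / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


# The same observed 30% termination rate at three different volumes.
for events, n in [(6, 20), (30, 100), (150, 500)]:
    low, high = wilson_interval(events, n)
    print(f"n={n:<4} observed={events / n:.0%}  plausible range {low:.0%} to {high:.0%}")
```

The plausible range narrows as entries accumulate, so a rate that looks alarming after 20 entries may be unremarkable once the source has delivered a few times that.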

For the headline incidence rate metric that sits alongside the field report on the Summary tab, see How to set up respondent sources.