Most research teams file MaxDiff and choice-based conjoint under "Advanced Analytics" — the bucket of techniques that needs a stats specialist and a separate tool after fielding closes. The routine is familiar: download the raw data, open SPSS or a dedicated Hierarchical Bayes tool, set up the model, wait for it to run, wrangle the output into a shape your team can read. By the time the utilities reach the people who actually needed them, the survey closed days ago.

That step is gone in MX8. Hierarchical Bayes runs on the platform, automatically, the moment your fielding closes. Utility scores and simulated share-of-preference are available in the same cross-tab reports as the rest of your data.

What Was Already Hard

Running MaxDiff or conjoint isn't intellectually hard — the methodology has been standard practice for decades. What's hard is the operational tax. A typical end-of-study workflow goes something like this: export the respondent data from your survey platform, import it into Sawtooth or a Bayesian library, configure the estimator, run the chains, retrieve the posterior samples, summarize them into per-respondent utilities, push those back into your reporting tool, and finally — assuming everything reconciled — share the results.

That workflow gets you the right answer. But every handoff is a place where things can go wrong: respondent IDs that don't match, weighting schemes that get applied differently in two tools, scaling conventions that aren't consistent across runs. And every handoff costs time. The headline result your stakeholders wanted on Monday lands on Thursday, after a data-processing (DP) analyst has finished untangling it.

What's Now Native

MX8 now runs the full Hierarchical Bayes pipeline inside the platform. The moment a MaxDiff or conjoint survey closes, posterior samples are drawn, individual-level utilities are persisted, and downstream report outputs become available with no manual step.

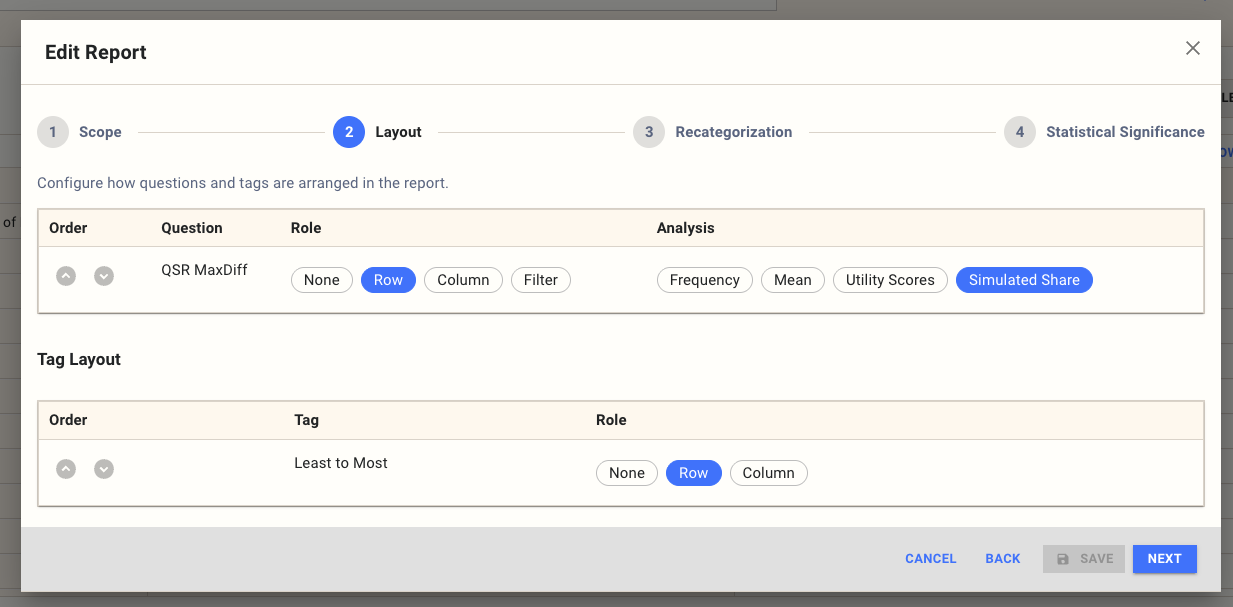

In the Edit Report dialog, set the question's Analysis style:

Two new options join the familiar Frequency and Mean:

- Utility Scores — a posterior-based score per item (for MaxDiff) or per attribute level (for conjoint), showing how strongly it drives choice.

- Simulated Share — the predicted share of preference across items or attribute levels.
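For readers who want a feel for how utilities relate to shares, here is a minimal sketch of the widely used logit rule: each respondent's utilities are exponentiated and normalized, then the per-respondent shares are averaged. This is an illustration only. The function names and the example utilities are hypothetical, and this post does not state which share rule MX8 applies, so treat the methodology documentation as the authority.

```python
import math


def share_of_preference(utilities):
    """Logit-rule shares for one respondent: exp(u_i) / sum_j exp(u_j)."""
    peak = max(utilities)  # subtract the max for numerical stability
    exps = [math.exp(u - peak) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]


def simulated_share(respondent_utilities):
    """Average per-respondent shares across the sample (unweighted here)."""
    per_respondent = [share_of_preference(u) for u in respondent_utilities]
    n = len(per_respondent)
    return [sum(column) / n for column in zip(*per_respondent)]


# Hypothetical utilities: three respondents, three items.
example_utilities = [
    [1.2, 0.3, -1.5],
    [0.1, 0.9, -1.0],
    [2.0, -0.5, -1.5],
]
shares = simulated_share(example_utilities)
```

Averaging shares at the respondent level, rather than applying the rule once to average utilities, keeps differences between respondents visible in the aggregate.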

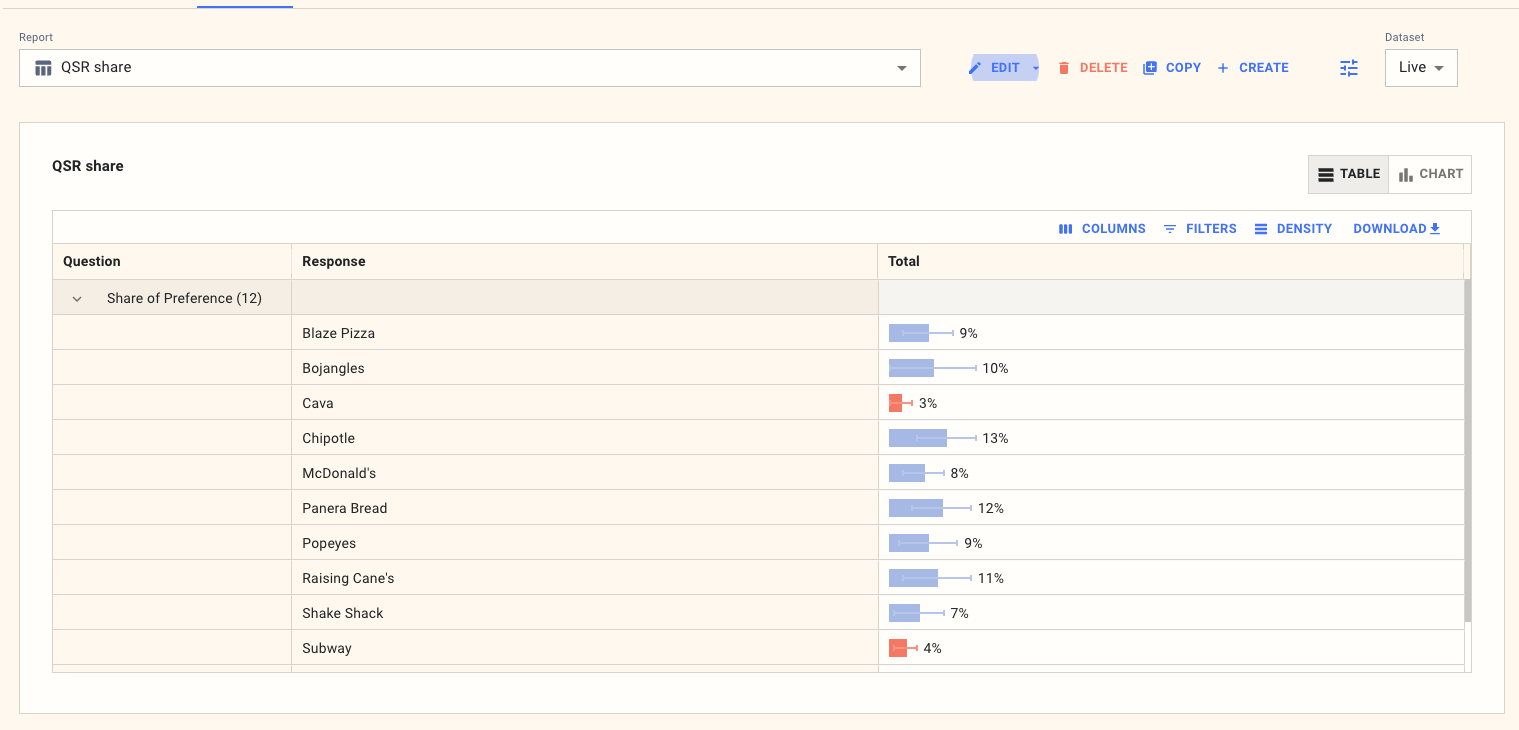

Open the report and the result is already there:

Each row is one item: the bar is its modeled share, the whiskers show uncertainty, and significance highlighting flags cells that the posterior places meaningfully above or below the rest. Cut by demographics, segments, or any tag you defined on the survey, the same way you would for any other cross-tab.

What This Means In Practice

Two practical wins drop out of moving HB into the platform.

The first is that an entire data-processing step disappears from the schedule. You don't export anything to run HB. You don't open a second tool. You don't reconcile respondent IDs across systems. The DP step that used to sit between fielding and reporting is no longer there, because the model has already run by the time you look at the report.

The second is that everything HB produces is weighted to the same scheme as everything else in your study. Utility scores and simulated share use the same respondent universe, the same calibration targets, and the same effective-sample-size machinery that the rest of your reports use. No separate weighting pass; no chance of two tools applying weights differently and producing inconsistent numbers. If your weighting scheme changes mid-study, the utilities and shares update with it.
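To make the weighting point concrete, the sketch below shows a weighted mean utility alongside an effective sample size computed with the Kish approximation. The choice of Kish's formula is an assumption for illustration, since this post does not say which formula the platform uses, and the weights and utilities are made up.

```python
def effective_sample_size(weights):
    """Kish approximation: (sum of weights)^2 / sum of squared weights."""
    total = sum(weights)
    return total * total / sum(w * w for w in weights)


def weighted_mean(values, weights):
    """Mean of values with each respondent counted by their weight."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Hypothetical respondent weights and utilities for a single item.
weights = [1.0, 1.0, 2.0, 0.5]
item_utilities = [0.8, 1.1, -0.2, 0.4]

n_eff = effective_sample_size(weights)
mean_utility = weighted_mean(item_utilities, weights)
```

With equal weights the effective sample size equals the respondent count; uneven weights shrink it, which is why using one weighting scheme everywhere matters for comparing precision across reports.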

For analysts who still want full draw-level access — for custom uncertainty work, simulators built outside the platform, or convergence diagnostics — both the respondent-level utilities and the raw posterior draws are exportable as well. The platform doesn't lock you in; it just lets you skip the legwork when you don't need it.
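As one example of custom uncertainty work on exported draws, the sketch below summarizes a list of posterior draws for a single utility into a mean and an equal-tailed percentile interval. It assumes the draws have already been loaded into a plain Python list; the function name and the synthetic draws are hypothetical, and the actual export layout is described in the Raw Draws Export documentation.

```python
def summarize_draws(draws, level=0.95):
    """Return (mean, lower, upper) using a nearest-rank percentile interval."""
    ordered = sorted(draws)
    n = len(ordered)
    tail = (1.0 - level) / 2.0
    lower = ordered[round(tail * (n - 1))]
    upper = ordered[round((1.0 - tail) * (n - 1))]
    mean = sum(ordered) / n
    return mean, lower, upper


# Synthetic stand-in for exported draws: 101 evenly spaced values in [0, 1].
synthetic_draws = [i / 100 for i in range(101)]
mean, lower, upper = summarize_draws(synthetic_draws)
```

A percentile interval like this is only as good as the chains behind it, so it is worth checking convergence diagnostics on the draws before relying on the bounds.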

When to Use It

Anywhere you'd previously have run a MaxDiff or choice-based conjoint study, you should now expect utility scores and simulated share to appear in the report by default. The new options are most valuable when you'd otherwise have written "HB analysis to follow" in a deck and lost a week to it. Quick reads on product configuration, share of preference across new offers, segment-level differences in what drives choice — all of these are now standard outputs that come with the survey rather than after it.

If you want the technical detail on the estimator, see the Utility and simulated share methodology documentation. For the export formats, see Utility Scores Export and Raw Draws Export. And if you'd like to talk through how this changes the way you'd run a study, get in touch.